TL;DR:

- Data analytics is transforming government decision-making, leading to measurable improvements in efficiency and safety.

- Successful analytics implementation relies on leadership, cross-agency collaboration, skilled personnel, and organizational culture.

- AI-assisted evidence review offers faster synthesis, but human judgment remains essential for nuanced policy analysis.

Government agencies have long leaned on tradition, precedent, and gut instinct to guide major decisions. That era is fading fast. Data analytics is now reshaping how public sector leaders allocate budgets, predict demand, and deliver services that actually work. From reducing emergency response times by hours to recovering billions in tax revenue, the numbers speak clearly. This article walks you through the core analytics frameworks, real-world performance benchmarks, leadership best practices, and the frontier of AI-assisted policy review, so you can move from knowing analytics matters to making it matter in your agency.

Table of Contents

- Understanding the core types of analytics in government

- How analytics drives measurable improvement in government operations

- Integrating analytics into government workflows: Leadership, culture, and data governance

- AI-assisted review versus human-driven evidence synthesis in policy

- The real challenge: Moving from analytics adoption to impact

- Ready for actionable analytics? Connect with experts

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Four core analytics types | Descriptive, diagnostic, predictive, and prescriptive analytics each play a distinct role in government decision-making. |
| Proven operational impact | Analytics consistently reduce backlogs, enhance accuracy, and improve public service efficiency through measurable outcomes. |
| Leadership and culture matter most | Success depends more on buy-in, skilled talent, and ethical standards than technology alone. |
| AI enables faster evidence review | AI-assisted policy reviews save time and offer strong synthesis, but sometimes need more stylistic refinement. |
| Expert support maximizes outcomes | Partnering with analytics professionals unlocks tailored solutions and sustainable impact. |

Understanding the core types of analytics in government

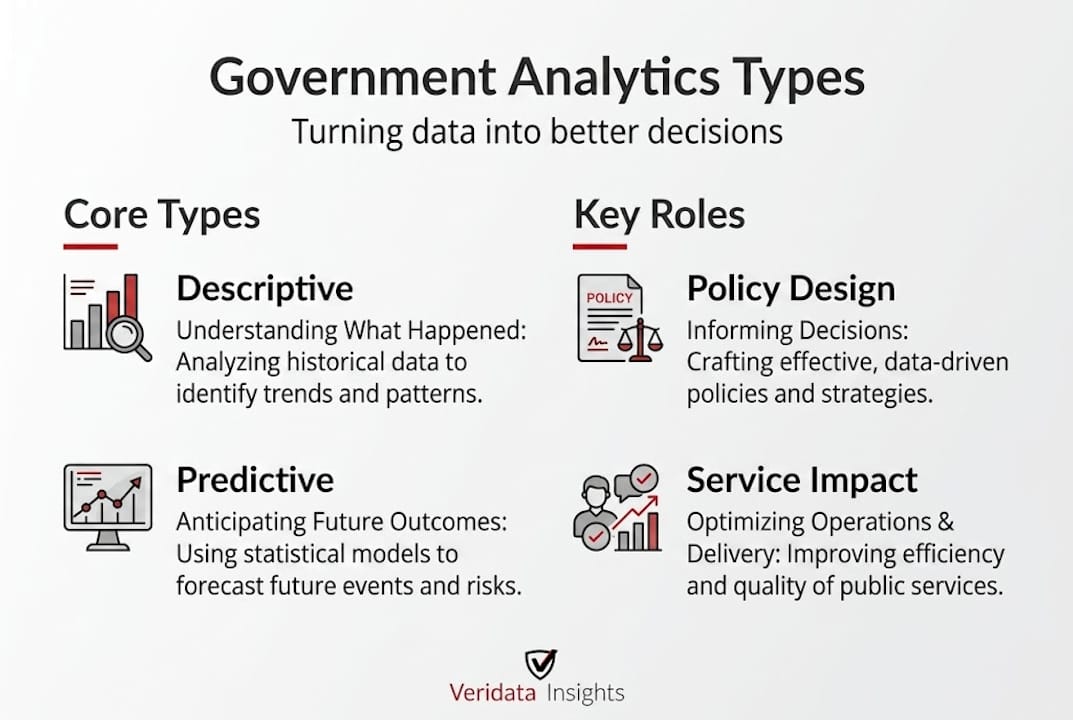

Before you can apply analytics effectively, you need to know what kind of analytics you’re actually working with. The field breaks into four distinct types, and each one plays a different role in the policy lifecycle. Confusing them, or skipping straight to the flashiest option, is one of the fastest ways to waste a budget and lose stakeholder trust.

Data analytics enables evidence-based policymaking by transforming raw data into actionable insights for policy formulation, implementation, and evaluation, using methodologies like descriptive, diagnostic, predictive, and prescriptive analytics. Think of these as rungs on a ladder. Each one builds on the last.

Here’s how they stack up:

| Analytics type | Core question | Government example |
|---|---|---|
| Descriptive | What happened? | Monthly unemployment rate reports |
| Diagnostic | Why did it happen? | Root cause of hospital readmission spikes |
| Predictive | What will happen? | Forecasting tax revenue for the next fiscal year |
| Prescriptive | What should we do? | Optimizing patrol routes to reduce crime |

Each type supports a distinct phase of the policy lifecycle. Here’s a step-by-step breakdown:

- Descriptive analytics gives leaders a clear baseline. You’re looking at historical data to understand where things stand. A city might use descriptive analytics to track monthly pothole repair completions or citizen complaint volumes by district.

- Diagnostic analytics investigates the “why” behind what the data shows. If school attendance drops in a specific neighborhood, diagnostic tools help you trace whether the cause is transportation gaps, health issues, or socioeconomic factors.

- Predictive analytics shifts focus to the future. Agencies use statistical models and machine learning to anticipate demand before it peaks. A social services department, for example, might predict which households are most likely to need emergency food assistance based on historical patterns and economic indicators.

- Prescriptive analytics goes one step further. It doesn’t just forecast. It recommends specific actions. A transportation department using prescriptive analytics might receive optimized road maintenance schedules that balance cost, safety risk, and traffic impact simultaneously.

Building data-informed government policies requires knowing which analytics type to apply at each stage. Using prescriptive analytics when you haven’t yet established a clean descriptive baseline is like trying to run before you can walk. Sequence matters. And the agencies that get this sequence right consistently see faster results and fewer costly course corrections down the road.

How analytics drives measurable improvement in government operations

Frameworks are useful. Numbers are convincing. Let’s talk about what analytics actually delivers when it’s applied well, because the benchmarks from real government deployments are striking.

Consider the results documented across multiple jurisdictions. The Illinois Department on Aging achieved an 18% reduction in eldercare backlogs, a 26% improvement in forecasting accuracy, and tightened budget variance from ±15% all the way down to ±4%. That’s not marginal improvement. That’s a structural shift in operational reliability.

The Illinois State Police saw response time drop from 14 hours to just 3 hours, alongside a 35% gain in patrol efficiency. Think about what that means for public safety outcomes and for officer morale. And at the national level, the Australian Taxation Office used analytics to identify and recover an additional AUD $4 billion in revenue that would otherwise have slipped through the cracks.

Here’s a quick summary of those gains:

| Agency | Metric improved | Before | After |
|---|---|---|---|
| Illinois Dept. on Aging | Eldercare backlog | Baseline | 18% reduction |
| Illinois Dept. on Aging | Forecasting accuracy | Baseline | +26% improvement |
| Illinois Dept. on Aging | Budget variance | ±15% | ±4% |
| Illinois State Police | Response time | 14 hours | 3 hours |
| Illinois State Police | Patrol efficiency | Baseline | +35% |
| Australian Taxation Office | Revenue recovered | Baseline | +AUD $4B |
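Two of these rows give explicit before-and-after values, which makes it easy to express them on the same footing as the percentage gains. The short Python sketch below does that arithmetic. The helper name `relative_reduction` is ours, and the derived percentages are calculated from the figures above rather than reported by the agencies themselves.

```python
def relative_reduction(before: float, after: float) -> float:
    """Return the drop from `before` to `after` as a fraction of `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Illinois State Police response time: 14 hours down to 3 hours.
response_cut = relative_reduction(14, 3)

# Illinois Dept. on Aging budget variance band: ±15% down to ±4%.
variance_cut = relative_reduction(15, 4)
variance_points = 15 - 4  # narrowing in percentage points

print(f"Response time cut by {response_cut:.1%}")
print(f"Variance band narrowed by {variance_points} points ({variance_cut:.1%})")
```

Reporting both the percentage-point change and the relative change for the variance band avoids a common source of confusion when briefing leadership.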

The patterns across these cases point to a few consistent drivers of success:

- Clear problem definition before data collection begins

- Cross-department data sharing rather than siloed reporting

- Iterative testing of models before full deployment

- Leadership accountability tied directly to analytics outcomes

Thinking about healthcare contexts? The healthcare analytics ROI story looks equally compelling, with administrators using data to cut readmission rates and optimize staffing in ways that directly improve patient outcomes.

Pro Tip: Don’t wait for perfect data to start. Government agencies that delay analytics adoption until their data is “clean enough” often find that the process of analyzing imperfect data is exactly what reveals which data problems need fixing first. Start with what you have, document the gaps, and improve iteratively.

In emergency medical services (EMS), predictive dispatch models are showing particular promise, cutting the time between call receipt and unit arrival in ways that can translate directly to lives saved. Analytics is not an abstract management tool. In emergency services, it’s a matter of life and death.

It’s also worth connecting the dots between public sector analytics and broader communication strategies. Agencies that pair strong data practices with data-driven campaign impact tools are finding it easier to communicate policy success to citizens and stakeholders, which is its own form of ROI.

Integrating analytics into government workflows: Leadership, culture, and data governance

Getting results from analytics isn’t just a technology problem. That’s the truth most vendors won’t tell you upfront. The agencies that stumble most often aren’t the ones with bad data. They’re the ones with great data tools and weak organizational culture.

The Federal Data Strategy outlines the backbone of what sustainable analytics integration looks like: leadership buy-in at the executive level, integration of diverse data sources across departments, formal data governance frameworks, and ethical AI standards with built-in transparency and privacy protections. Standards like the UK’s GovS 010 offer useful models even for non-UK agencies, demonstrating how formal data governance documentation creates accountability at every level.

Here’s what effective integration actually requires:

- Executive sponsorship. When agency heads visibly champion analytics programs, adoption rates climb and budget fights shrink. Analytics without a senior champion tends to stall at the pilot stage.

- Cross-agency data integration. Siloed data is one of the biggest barriers in government. Effective programs break down departmental walls and create shared data lakes or federated architectures that allow different teams to access relevant information without compromising security.

- Skilled talent. Hiring data scientists is only part of the equation. You also need policy analysts who understand data, communications staff who can translate findings, and IT teams who can maintain infrastructure. Talent strategy has to be broader than just “hire a data team.”

- Ethical AI standards. Algorithmic decisions in public sector contexts carry real risks. Predictive policing models, benefits eligibility tools, and risk scoring systems can embed bias if they’re not carefully designed, audited, and monitored. Privacy protection and algorithmic transparency aren’t optional. They’re foundational.

- Cultural readiness. This is the big one. Analytics cultures require psychological safety for staff to question data, flag anomalies, and admit when models aren’t performing. Agencies that punish skepticism end up with analytics theater, fancy dashboards that no one actually trusts or uses.

“Prioritizing culture and skills over technology is the single most underrated factor in government analytics success.” This sentiment runs through virtually every high-performing public sector analytics program we’ve encountered.

Useful data visualization dashboards play a major role here. When data is accessible and visually clear, more staff engage with it. That engagement builds the kind of data literacy that makes an analytics culture self-sustaining over time.

Pro Tip: Before investing in new analytics tools, conduct an honest internal assessment of data literacy across your agency. If most staff can’t interpret a basic trend chart, the most sophisticated platform in the world won’t move the needle. Start with literacy, then layer on technology.

For agencies working through the early stages of analytics adoption, municipal strategy tips offer practical guidance on phasing implementation in ways that build momentum without overwhelming existing teams.

AI-assisted review versus human-driven evidence synthesis in policy

Here’s where things get genuinely interesting, and where government policy teams are facing a real fork in the road.

AI-assisted evidence review is not a future concept. It’s happening now, and the results from formal comparative studies are worth paying close attention to.

A UK government comparative study found that AI-assisted evidence review was completed 23% faster (90 hours versus 118 hours) and excelled in synthesis tasks, but required more revisions for style. Critically, both AI-assisted and human-only reviews produced similar overall quality and identified similar causal mechanisms.

Let that sink in. Similar quality at 23% faster. That’s a meaningful efficiency gain for any policy team working under deadline pressure.
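It helps to be precise about what "faster" means before quoting the figure in a briefing. The quick Python check below uses only the two hour counts cited above. The second calculation (throughput gain) is our own reading of the same numbers, not something the study reports.

```python
AI_HOURS = 90       # AI-assisted review, as cited above
HUMAN_HOURS = 118   # human-only review, as cited above

# Reading 1: share of the human-only time that was saved.
hours_saved = HUMAN_HOURS - AI_HOURS
time_saved_share = hours_saved / HUMAN_HOURS

# Reading 2: how much more work fits into the same number of hours.
throughput_gain = HUMAN_HOURS / AI_HOURS - 1

print(f"Hours saved: {hours_saved}")
print(f"Time saved as share of human-only effort: {time_saved_share:.1%}")
print(f"Throughput gain at the same staffing: {throughput_gain:.1%}")
```

The first reading lines up with the reported 23% figure. The second shows why the saving compounds for teams running many reviews back to back.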

Here’s how the two approaches compare across key dimensions:

| Dimension | AI-assisted review | Human-driven review |
|---|---|---|
| Speed | Faster (90 hours avg.) | Slower (118 hours avg.) |
| Synthesis quality | Strong | Strong |
| Style consistency | Needs more revision | More consistent |
| Mechanism identification | Similar accuracy | Similar accuracy |
| Cost | Generally lower | Generally higher |
| Contextual nuance | Variable | Stronger |

What does this mean in practice for your policy team? A few clear takeaways:

- Use AI for speed, not replacement. The 23% time saving is real and significant. But it works best when human reviewers are in the loop for final editing, contextual interpretation, and political sensitivity checks.

- Plan for revision cycles. AI-assisted drafts consistently need more stylistic revision. Build that into your timelines, and you’ll avoid the frustration that comes from expecting a finished product from a first-pass AI output.

- Leverage AI strengths in synthesis. When you’re pulling together evidence across many sources, AI tools are genuinely strong. They process volume faster and can surface connections that human reviewers might miss under time pressure.

- Don’t skip human judgment on sensitive policy areas. Equity, community impact, and political feasibility all require human context that AI tools currently cannot replicate reliably.

“The question isn’t whether to use AI in evidence review. It’s knowing where AI adds speed without sacrificing the judgment that good policy demands.”

For teams interested in AI analytics in consulting contexts, the parallels to government policy work are strong. The hybrid human-plus-AI model is emerging as the gold standard, combining the efficiency of machine processing with the nuance of expert human review.

The real challenge: Moving from analytics adoption to impact

We’ve seen it more times than we’d like to admit. An agency invests in a platform, runs a pilot, sees promising results, and then… the momentum quietly dies. The dashboard gets ignored. The data team gets reassigned. The insights stop influencing decisions.

Technology alone does not create impact. Full stop. The agencies that sustain analytics success treat it as ongoing change management, not a one-time project. They invest continuously in talent, build accountability structures that require data-informed reporting, and maintain trusted partnerships with research and analytics experts who challenge assumptions and keep standards high.

The difference between analytics that shapes strategy and analytics theater is accountability. Who is responsible for acting on the findings? What happens when the data contradicts a preferred narrative? How does your agency handle those moments?

Building a reliable research methodology into your standard operating procedures is one of the most practical steps you can take. When methodology is documented and consistent, findings become defensible, reproducible, and trustworthy across leadership transitions.

Pro Tip: Schedule quarterly analytics reviews at the leadership level, not just among data teams. When executives regularly engage with findings and are expected to explain how they responded to the data, the entire organization takes analytics more seriously.

Ready for actionable analytics? Connect with experts

Government agencies face data challenges that are genuinely complex. The stakes are high, the data is often messy, and the political environment adds pressure that private sector analytics teams rarely encounter. That’s exactly where we come in. At Veridata Insights, we work with agencies and policy teams to design research methodologies, collect and process data, and translate findings into clear, actionable insights through reporting and data visualization. Whether you need a focused consultation or full-service research support, we’re available seven days a week with no project minimums. When you’re ready to move from questions to answers, connect with analytics experts at Veridata Insights and let’s build something useful together.

Frequently asked questions

What is evidence-based policymaking and how does analytics enable it?

Evidence-based policymaking uses data insights to guide policy formulation, implementation, and evaluation, powered by analytics methods including descriptive, diagnostic, predictive, and prescriptive approaches. Data analytics transforms raw data into actionable insights that replace assumption-driven decisions with measurable, reproducible ones.

How measurable are analytics-driven improvements in government?

Analytics improvements are highly measurable and often dramatic, with documented gains across response times, forecasting accuracy, and budget efficiency. The Illinois State Police, for example, cut response time from 14 to 3 hours while achieving a 35% patrol efficiency gain.

What are key success factors for government analytics implementation?

The most important factors are leadership buy-in, skilled talent, ethical standards, and strong cross-agency integration, not just advanced technology. Federal Data Strategy practices consistently prioritize culture and governance frameworks over platform selection as the primary drivers of lasting success.

How does AI-assisted evidence review compare with traditional methods?

AI-assisted reviews complete work 23% faster and excel at synthesis tasks, though they require more stylistic revision than human-driven reviews. Both approaches deliver similar overall quality and identify similar mechanisms, making a hybrid model the most practical choice for most policy teams.