TL;DR:

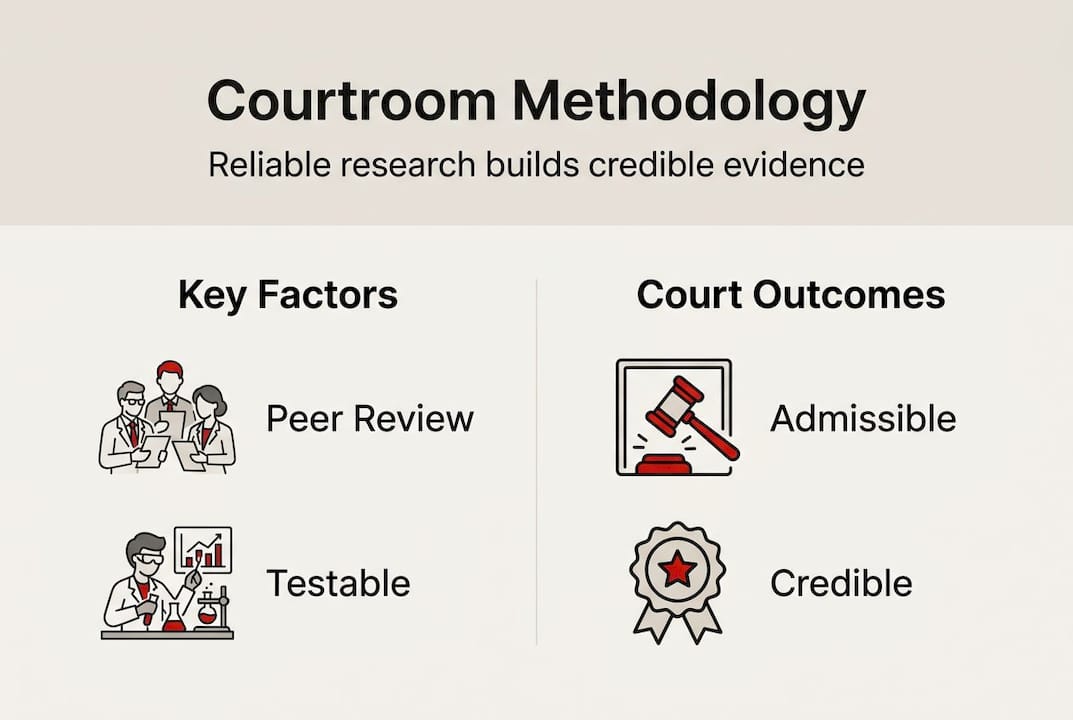

- Courts scrutinize expert methodology to prevent junk science from influencing trials.

- Standards like Daubert, Frye, and Rule 702 guide admissibility based on research reliability.

- Strong, well-documented methodology enhances expert credibility and reduces the risk of exclusion.

Expert testimony can make or break a case. But not all expert evidence is created equal, and courts know it. Poor methodology risks misleading juries with junk science, fueling frivolous lawsuits and unjust awards. That’s why judges don’t just wave experts through anymore. Standards like Daubert, Frye, and Rule 702 exist precisely to separate rigorous research from guesswork dressed up in a lab coat. If you’re a litigator or legal professional, understanding how research methodology is evaluated in court isn’t optional. It’s the difference between a credible case and a costly exclusion.

Table of Contents

- Research methodology as the foundation of expert evidence

- Key standards: Daubert, Frye, and Rule 702

- The impact of methodology: Case studies and empirical trends

- Building credible expert testimony: Strategies for legal professionals

- The uncomfortable truth: What most legal professionals miss about methodology in court

- Partnering with research experts for courtroom success

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Methodology determines admissibility | Expert evidence is only trusted if based on reliable, peer-reviewed methodology. |
| Court standards evolve | Daubert and Rule 702 have tightened admissibility to protect against junk science. |
| Legal teams must strategize | Litigators should proactively align evidence practices with the latest courtroom standards. |
| Collaboration prevents mistakes | Working with professional research teams ensures compliance and strengthens expert credibility. |

Research methodology as the foundation of expert evidence

Every expert witness walks into court carrying more than credentials. They carry a methodology. And that methodology is what courts actually scrutinize.

The idea that a respected expert automatically equals reliable testimony is one of the most dangerous assumptions in litigation. Courts have seen enough “junk science” to know better. Under the Daubert standard, trial judges act as gatekeepers to ensure expert testimony is based on reliable research methodology, focusing on principles and methods rather than conclusions. That last part matters. A judge isn’t evaluating whether the expert’s opinion sounds right. They’re evaluating how the expert got there.

This gatekeeping role fundamentally changes how legal teams should approach expert evidence. It’s not enough to find someone with impressive credentials. You need someone whose process can withstand scrutiny.

Poor methodology creates several serious risks:

- Exclusion of key evidence at a critical moment in trial

- Jury confusion when flawed science goes unchallenged

- Appellate vulnerabilities tied to improperly admitted testimony

- Credibility damage that spills over to the entire case narrative

“The court’s role is not to determine the correctness of the expert’s conclusions, but to determine whether the reasoning or methodology underlying the testimony is scientifically valid.” This distinction is what separates a winning expert from an excluded one.

For litigators, this means investing in qualitative research strategies that are built from the ground up with admissibility in mind. It also means working with research partners who understand ethical research practices and can document every methodological decision clearly.

Methodology isn’t a back-office concern. It’s a front-line legal strategy.

Key standards: Daubert, Frye, and Rule 702

Three frameworks dominate how courts evaluate the reliability of expert evidence. Knowing the difference between them isn’t just academic. It directly shapes how you build and defend your case.

Daubert applies in federal courts and most states. It gives judges a multi-factor test to assess whether methodology is scientifically sound. The Daubert factors include testability, peer review and publication, known or potential error rate, existence of standards and controls, and general acceptance in the scientific community. No single factor is decisive, but together they paint a clear picture of methodological rigor.

Frye is older and simpler. It asks one question: is the methodology generally accepted within the relevant scientific community? Some states still use Frye, and it tends to be more conservative, favoring established techniques over novel approaches.

Rule 702 is the Federal Rule of Evidence that governs expert testimony; its 2000 amendment codified Daubert. The 2023 amendment goes further, making explicit that the proponent must show it is more likely than not (a preponderance standard) that the testimony meets the rule’s reliability requirements. That update is significant because it responds to courts that failed to properly gatekeep junk science: judges must affirmatively find that reliability requirements are met, not simply assume they are.

| Standard | Jurisdiction | Core test | Focus |
|---|---|---|---|
| Daubert | Federal + most states | Multi-factor reliability | Principles and methods |
| Frye | Select states | General acceptance | Scientific community consensus |
| Rule 702 | Federal | Preponderance of reliability | Judge as active gatekeeper |

Key practical takeaways for legal teams:

- Identify which standard applies in your jurisdiction before selecting an expert

- Ensure your expert’s methodology is documented and defensible under the applicable test

- Treat the 2023 Rule 702 update, which raises the bar, as the new baseline

- Consider collaboration in expert reporting to align legal strategy with research rigor from day one

These standards aren’t obstacles. They’re a roadmap. Use them to build stronger cases, not just to avoid exclusions.

The impact of methodology: Case studies and empirical trends

Theory is useful. Real-world consequences are clarifying.

Exclusion of expert testimony is still the exception, not the rule. But recent cases show heightened scrutiny and inconsistent application of standards across jurisdictions. Some courts apply Daubert aggressively. Others treat it more loosely. That inconsistency is itself a risk factor for litigators.

What does the data tell us?

| Scenario | Methodology quality | Outcome |
|---|---|---|
| Survey evidence in trademark cases | Rigorous, peer-reviewed design | Admitted; weighted heavily |
| Forensic bite-mark analysis | Contested; limited error-rate data | Increasingly challenged post-2020 |
| Economic damages modeling | Transparent, documented assumptions | Admitted with minor challenges |
| Causation testimony in toxic tort | Speculative, no peer review | Excluded under Daubert |

One consistent finding: using robust, Daubert-compliant methodologies enhances expert credibility and admissibility, directly impacting case success. The research also notes something troubling. In areas like forensic evidence, cognitive biases can work against rigorous scrutiny. Familiarity breeds complacency, and courts sometimes defer to long-standing techniques even when the underlying methodology is weak.

Pro Tip: Don’t assume that because a methodology has been admitted before, it will sail through again. Post-2023 Rule 702 scrutiny is tighter. Build your expert’s foundation as if every factor will be challenged.

Here are the most common methodological pitfalls that lead to exclusion or weakened testimony:

- Failing to document the research process in enough detail for court review

- Relying on samples that are too small or unrepresentative of the relevant population

- Skipping peer review or using unpublished, untested methods

- Ignoring known error rates or failing to disclose them transparently

- Allowing confirmation bias to shape data collection or analysis
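To make the sample-size and error-rate pitfalls above concrete, here is a minimal sketch of how sampling error shrinks as a survey sample grows. It assumes a simple random sample and uses the standard normal-approximation formula for a proportion; the function name `margin_of_error` and the sample sizes are illustrative assumptions, not figures from any case discussed here.

```python
import math


def margin_of_error(sample_size: int, proportion: float = 0.5, z: float = 1.96) -> float:
    """Approximate margin of error for a survey proportion.

    Assumes a simple random sample and the normal approximation.
    z=1.96 corresponds to a 95% confidence level; proportion=0.5 is the
    most conservative (widest) case.
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    if not 0.0 <= proportion <= 1.0:
        raise ValueError("proportion must be between 0 and 1")
    return z * math.sqrt(proportion * (1.0 - proportion) / sample_size)


# Illustrative sample sizes only (hypothetical, not drawn from real studies).
for n in (50, 400, 1000):
    moe_points = margin_of_error(n) * 100
    print(f"n={n}: roughly +/- {moe_points:.1f} percentage points")
```

Under these assumptions, a sample of 50 carries a margin of error near 14 percentage points, while a sample of 400 brings it under 5. Real survey designs (weighting, clustering, non-response) need a statistician’s adjustment, so treat this as a disclosure aid rather than a substitute for expert analysis.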

Avoiding common market research pitfalls and investing in expert survey programming are practical steps that directly reduce these risks.

Building credible expert testimony: Strategies for legal professionals

Knowing the standards is step one. Building testimony that meets them is where the real work happens.

The foundation of credible expert testimony is a methodology that can be explained clearly, defended confidently, and documented thoroughly. Judges as gatekeepers focus on principles and methods, which means your expert needs to articulate not just what they found, but exactly how they found it.

Pro Tip: Ask your expert to walk you through their methodology as if explaining it to a skeptical judge. If they struggle to do that clearly, opposing counsel will make it painful in court.

Here are the core strategies we recommend for legal teams building expert testimony:

- Start with the standard. Know whether Daubert, Frye, or Rule 702 applies. Design the research methodology to satisfy that standard from the beginning, not as an afterthought.

- Document everything. Every decision in the research process should be recorded: sample selection, data collection methods, analysis approach, and any deviations from the original plan.

- Prioritize peer-reviewed methods. Where possible, use methodologies that have been published, tested, and accepted by the relevant scientific community.

- Disclose error rates honestly. Trying to hide uncertainty backfires. Courts respect transparency. Experts who acknowledge limitations while explaining controls are more credible, not less.

- Align research and legal teams early. The biggest methodological failures happen when researchers and lawyers work in silos. Aligning research deliverables with your legal timeline prevents last-minute surprises.

- Invest in clear reporting. Judges and juries aren’t scientists. Client-friendly research presentations that communicate findings plainly without sacrificing rigor are a genuine competitive advantage.

- Consider formal training. Resources like a science writing workshop can sharpen how your team communicates complex methodology in accessible, persuasive language.
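The “document everything” strategy above can be sketched as a simple decision log that a research team keeps alongside the study. This is one possible structure, not a required format: the class names `MethodDecision` and `MethodologyLog`, the field names, and the sample entries are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class MethodDecision:
    """One recorded methodological decision (hypothetical schema)."""
    decided_on: str            # ISO date string, e.g. "2024-03-01"
    stage: str                 # e.g. "sampling", "data collection", "analysis"
    decision: str              # what was decided
    rationale: str             # why it was decided
    deviation_from_plan: bool = False


@dataclass
class MethodologyLog:
    """Running log of decisions for a single study."""
    study_name: str
    decisions: List[MethodDecision] = field(default_factory=list)

    def record(self, stage: str, decision: str, rationale: str,
               deviation_from_plan: bool = False,
               decided_on: Optional[date] = None) -> MethodDecision:
        # Default to today so entries are dated when they are made.
        entry = MethodDecision(
            decided_on=(decided_on or date.today()).isoformat(),
            stage=stage,
            decision=decision,
            rationale=rationale,
            deviation_from_plan=deviation_from_plan,
        )
        self.decisions.append(entry)
        return entry

    def deviations(self) -> List[MethodDecision]:
        """Entries that departed from the original research plan."""
        return [d for d in self.decisions if d.deviation_from_plan]

    def to_json(self) -> str:
        """Serialize the full log for sharing with counsel."""
        return json.dumps(asdict(self), indent=2)


# Hypothetical entries for illustration only.
log = MethodologyLog("Example consumer survey")
log.record("sampling", "Quota sample by age and region",
           "Mirror the relevant purchaser population", decided_on=date(2024, 3, 1))
log.record("data collection", "Extended fieldwork by three days",
           "Quota for one region was under-filled",
           deviation_from_plan=True, decided_on=date(2024, 3, 18))
print(f"{len(log.deviations())} deviation(s) recorded")
print(log.to_json())
```

Keeping deviations flagged separately makes it easier for the expert to explain them proactively instead of having them surface first on cross-examination.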

The goal isn’t just admissibility. It’s persuasiveness. A methodology that survives a Daubert challenge but confuses the jury hasn’t fully done its job.

The uncomfortable truth: What most legal professionals miss about methodology in court

Here’s what we see too often: legal teams treat methodology as a compliance checkbox rather than a strategic asset. They find a credentialed expert, confirm the methodology sounds reasonable, and move on. That approach worked better in a pre-2023 world.

The uncomfortable reality is that expertise alone doesn’t protect you. Scrutiny is intensifying, application is inconsistent, and cognitive biases in courtrooms can cut both ways. Familiarity with a technique can shield weak methodology from scrutiny, while genuinely rigorous novel methods sometimes face unfair skepticism.

Our take? Stop letting court standards set your ceiling. Use them as your baseline, then build higher. The legal professionals who win consistently aren’t just avoiding exclusion. They’re presenting methodology so clean and well-documented that opposing counsel has nothing to grab onto. That’s not luck. That’s deliberate research strategy.

Methodological complacency is a liability. Treat rigor as a feature, not a formality.

Partnering with research experts for courtroom success

Building Daubert-compliant, court-ready research is a team effort. You bring the legal strategy. We bring the methodological precision. At Veridata Insights, we work with legal professionals to design and execute research that holds up under the toughest scrutiny, whether that means survey programming, qualitative research design, data collection, or expert reporting support. No project minimums. Seven days a week. We do as much or as little as your case requires. Strong cases are built on data you can trust. If you’re ready to strengthen your expert evidence from the ground up, reach out to our team and let’s talk about what your case needs.

Frequently asked questions

What makes a research methodology Daubert-compliant?

A Daubert-compliant methodology is testable, has been subjected to peer review and publication, has a known or potential error rate, uses standards and controls, and is generally accepted by the relevant scientific community. The five factors are weighed together, and no single one is decisive.

How do Rule 702 amendments affect admissibility of expert testimony?

The 2023 Rule 702 amendments require judges to affirmatively find that reliability requirements are met under a preponderance standard, raising the bar for admissibility and reducing the chance that junk science slips through.

Why is poor methodology so dangerous in court?

Poor methodology misleads juries, invites frivolous claims, and produces unjust verdicts. It also exposes the entire case to appellate challenges that could have been avoided with stronger research design.

How often are expert testimonies excluded based on methodology?

Exclusion remains the exception rather than the rule, but recent case trends show heightened scrutiny and inconsistent application across jurisdictions, making methodological rigor more important than ever.
