Qualitative data offers rich insights into human behavior, motivations, and experiences, but many researchers struggle to transform raw interview transcripts, focus group notes, and observations into actionable findings. Without a structured workflow, you risk missing critical patterns, introducing bias, or drowning in unorganized information. This guide walks you through a proven step-by-step process for analyzing qualitative data efficiently, from preparation and coding to verification and integration with quantitative metrics, ensuring your research delivers reliable, meaningful results.

Table of Contents

- Key takeaways

- Preparing for qualitative data analysis

- Executing the qualitative data analysis workflow

- Verifying and integrating qualitative data results

- Enhance your qualitative data analysis with expert support

- Frequently asked questions

Key takeaways

| Point | Details |
|---|---|
| Structured workflow | A clear, stepwise workflow helps transform raw qualitative data into actionable insights and reduces the risk of missing patterns. |
| Preparation and tools | Solid preparation and careful tool selection lay the groundwork for accurate coding and efficient analysis. |
| Avoiding pitfalls | Following expert tips helps prevent biases, disorganization, and backtracking during analysis. |
| Verification and integration | Verifying findings and integrating qualitative results with quantitative measures increases reliability and actionability. |

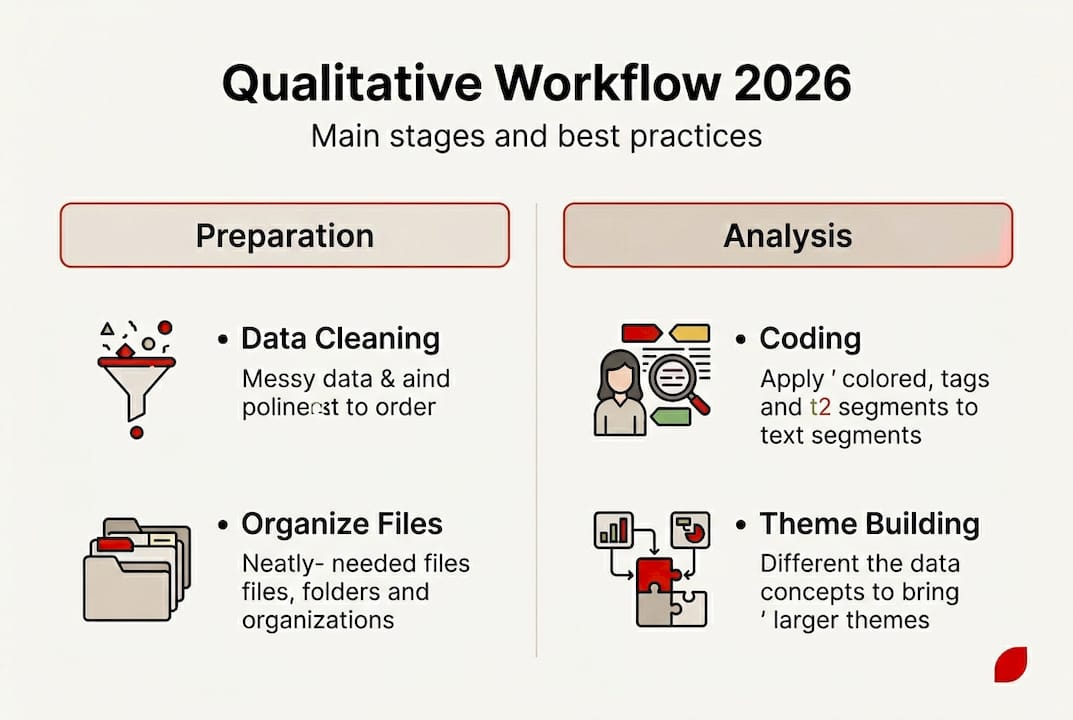

Preparing for qualitative data analysis

Before diving into coding and interpretation, you need a solid foundation. Rushed preparation leads to disorganized data, missed insights, and wasted time backtracking. Start by reviewing your raw qualitative datasets for completeness and clarity. Check interview transcripts for accuracy, ensure focus group recordings are audible, and verify that observational notes contain sufficient context. Clean your data by removing duplicates, correcting obvious errors, and standardizing formats across sources.

Organize raw data systematically so you can access and reference it easily throughout analysis. Create a logical folder structure with clear naming conventions that indicate data type, date, participant ID, and other relevant identifiers. For example, use formats like “Interview_P023_2026-01-15” rather than vague labels. This organization becomes critical when you need to return to source material during coding or verification.
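A naming convention is easier to enforce when it is checked by a script. The sketch below is a minimal illustration, assuming a pattern of data type, three-digit participant ID, and ISO date as in the example above; the function names `build_name` and `parse_name` are hypothetical, and your own convention may need extra fields.

```python
import re
from datetime import date

# Assumed pattern: <DataType>_P<3-digit participant ID>_<YYYY-MM-DD>
NAME_PATTERN = re.compile(
    r"^(?P<kind>[A-Za-z]+)_P(?P<pid>\d{3})_(?P<day>\d{4}-\d{2}-\d{2})$"
)


def build_name(kind: str, participant_id: int, day: date) -> str:
    """Build a file stem such as 'Interview_P023_2026-01-15'."""
    return f"{kind}_P{participant_id:03d}_{day.isoformat()}"


def parse_name(stem: str) -> dict:
    """Split a file stem into its parts; raise ValueError if it does not match."""
    match = NAME_PATTERN.match(stem)
    if match is None:
        raise ValueError(f"Unexpected file name: {stem!r}")
    return {
        "kind": match["kind"],
        "participant_id": int(match["pid"]),
        "day": date.fromisoformat(match["day"]),
    }
```

Running `parse_name` over every file in a project folder is a quick way to catch vague or mistyped labels before coding begins.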

Choose digital tools and software designed for qualitative coding and analysis. Popular options include NVivo, MAXQDA, ATLAS.ti, and Dedoose, which offer features such as automated coding suggestions, visualization tools, and collaboration capabilities. Organizing your data and selecting suitable software up front makes analysis more efficient, allowing you to focus on interpretation rather than administrative tasks. Evaluate tools based on your project size, budget, team collaboration needs, and technical comfort level.

Understand the types of qualitative data you’re working with, as each requires slightly different handling:

- Interview transcripts demand attention to individual narratives and direct quotes

- Focus group discussions require tracking group dynamics and interaction patterns

- Observational field notes need careful contextualization of behaviors and settings

- Document analysis involves identifying themes across written materials

- Visual data like photos or videos require descriptive coding of content and context

Set clear analysis objectives aligned with your research questions before you begin coding. Ask yourself what specific questions you need to answer, what stakeholders expect from the findings, and how results will inform decisions. Document these objectives in a brief analysis plan that guides your coding framework and helps you stay focused when navigating large datasets. Consider combining qualitative and quantitative research approaches from the start if your objectives require both depth and breadth.

| Preparation Task | Purpose | Common Tool |
|---|---|---|
| Data cleaning | Remove errors and standardize formats | Excel, Google Sheets |
| Systematic organization | Enable easy access and reference | Cloud storage, project folders |
| Software selection | Streamline coding and analysis | NVivo, MAXQDA, ATLAS.ti |
| Objective setting | Align analysis with research goals | Analysis plan document |

Pro Tip: Create a codebook template before you start coding. Include columns for code name, definition, example quotes, and inclusion/exclusion criteria. This upfront investment saves hours of confusion later and ensures consistency if multiple researchers are coding.
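If you keep the codebook in a spreadsheet, a small script can generate the template for you. The sketch below is one possible layout, assuming the columns named in the tip with inclusion and exclusion criteria split into two fields; `CodebookEntry` and `codebook_to_csv` are hypothetical names, not part of any qualitative analysis package.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class CodebookEntry:
    """One row of the codebook; field names become the CSV column headers."""
    code_name: str
    definition: str
    example_quote: str
    inclusion_criteria: str
    exclusion_criteria: str


def codebook_to_csv(entries: list[CodebookEntry]) -> str:
    """Serialize codebook entries to CSV text, header row first."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(CodebookEntry)]
    )
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


# Illustrative entry; the definition and criteria are placeholders to adapt.
example = CodebookEntry(
    code_name="survey fatigue",
    definition="Participant describes tiring of the survey itself",
    example_quote="feeling overwhelmed by too many survey questions",
    inclusion_criteria="Mentions of length, repetition, or effort",
    exclusion_criteria="Complaints about the product rather than the survey",
)
```

Writing the output of `codebook_to_csv([example])` to a file gives every coder the same starting template, and saving a new copy whenever definitions change keeps a version history.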

Executing the qualitative data analysis workflow

Once preparation is complete, you’re ready to systematically extract meaning from your data. The execution phase transforms raw text into structured insights through iterative coding and pattern identification. This process requires both analytical rigor and creative interpretation.

Start with open coding to label concepts, ideas, and phenomena in your data. Read through transcripts or notes line by line, assigning descriptive codes to segments that capture distinct ideas. Keep codes close to the data at this stage rather than imposing preconceived categories. For example, if a participant mentions “feeling overwhelmed by too many survey questions,” you might code this as “survey fatigue” or “respondent burden.” Generate as many codes as needed to capture the richness of your data, typically resulting in 50 to 150 initial codes for a moderate-sized project.

Use axial coding to relate codes into broader categories and identify connections between concepts. Review your initial codes and look for patterns, groupings, and relationships. Codes like “survey fatigue,” “lengthy questionnaires,” and “abandonment” might cluster into a category called “barriers to completion.” This phase reduces the number of codes while building a hierarchical structure that reveals how concepts relate to each other. Axial coding moves you from description toward explanation.
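The grouping step can be recorded as a simple mapping from codes to categories. The sketch below uses the codes and category from the example above; the assignment itself is the researcher's judgment, and the function name `group_by_category` is hypothetical.

```python
from collections import defaultdict

# Each open code is assigned to one category during axial coding.
CODE_TO_CATEGORY = {
    "survey fatigue": "barriers to completion",
    "lengthy questionnaires": "barriers to completion",
    "abandonment": "barriers to completion",
}


def group_by_category(code_to_category: dict[str, str]) -> dict[str, list[str]]:
    """Invert a code-to-category mapping into category -> list of codes."""
    categories = defaultdict(list)
    for code, category in code_to_category.items():
        categories[category].append(code)
    return dict(categories)
```

Keeping the mapping in one place makes the hierarchy easy to review with colleagues and to revise as categories are merged or split.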

Identify overarching themes and patterns that answer your research questions. Themes are broader than categories and represent the essential insights emerging from your analysis. A theme like “design choices impact data quality” might encompass multiple categories including barriers to completion, question clarity issues, and respondent engagement factors. A structured coding and thematic analysis process leads to deeper insights by forcing you to synthesize findings rather than simply reporting what participants said.

The coding workflow typically follows this sequence:

- Read through all data once without coding to get familiar with content and range

- Conduct first-pass open coding on a subset of data to develop initial code list

- Refine codebook definitions and apply codes systematically across full dataset

- Use axial coding to group related codes into meaningful categories

- Identify themes by analyzing patterns across categories and relating them to research questions

- Review and validate themes against original data to ensure they’re grounded in evidence

Use memo writing to document emerging insights, questions, and interpretations throughout the process. Memos are analytical notes you write to yourself about what you’re noticing in the data. They might explore why certain codes appear together, question assumptions, or sketch preliminary explanations for patterns. These memos become invaluable when writing your final report because they capture your thinking process and help you articulate findings clearly.

Apply qualitative software features to streamline coding and enhance analysis. Most platforms allow you to auto-code based on keywords, visualize code relationships through network diagrams, query co-occurrence patterns, and generate code frequency reports. However, technology should support rather than replace analytical thinking. Software can’t interpret meaning or judge what’s significant, so maintain active engagement with your data rather than relying solely on automated features.
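To make keyword auto-coding and co-occurrence queries concrete, here is a rough sketch of the underlying idea. It is not how any particular package implements these features; the keyword lists are invented for illustration, and plain substring matching is deliberately crude (for example, "quit" would also match "quite").

```python
from collections import Counter
from itertools import combinations

# Hypothetical keyword lists; in practice these would come from the codebook.
KEYWORDS = {
    "survey fatigue": ["overwhelmed", "too many questions"],
    "lengthy questionnaires": ["too long", "lengthy"],
    "abandonment": ["gave up", "quit"],
}


def auto_code(segment: str) -> set[str]:
    """Return every code whose keywords appear in the segment (case-insensitive)."""
    text = segment.lower()
    return {
        code
        for code, words in KEYWORDS.items()
        if any(word in text for word in words)
    }


def co_occurrence(segments: list[str]) -> Counter:
    """Count how often each pair of codes is applied to the same segment."""
    counts = Counter()
    for segment in segments:
        codes = sorted(auto_code(segment))  # sort so each pair has one stable order
        counts.update(combinations(codes, 2))
    return counts
```

Treat the output as a list of candidates to review by hand. A keyword match says nothing about what the participant meant, which is why automated passes need the human interpretation described above.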

Pro Tip: Code a sample of data with a colleague and compare results to check for consistency. Discuss differences in how you applied codes and refine definitions until you achieve reasonable agreement. This intercoder reliability check strengthens the credibility of your analysis, especially on high-stakes projects.
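One common way to put a number on agreement between two coders is Cohen's kappa, which adjusts raw agreement for the agreement expected by chance. The tip above does not prescribe a statistic, so treat this as one option. The sketch assumes each segment receives exactly one code from each coder; segments with multiple codes need a different approach.

```python
from collections import Counter


def cohens_kappa(coder_a: list[str], coder_b: list[str]) -> float:
    """Cohen's kappa for two coders who each assigned one code per segment."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of segments")
    n = len(coder_a)
    # Observed agreement: share of segments where the codes match.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: based on how often each coder used each code.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1 - expected)
```

A kappa of 1 means perfect agreement and 0 means agreement no better than chance. What counts as "reasonable" depends on the project, so agree on a threshold with your team before comparing results.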

Verifying and integrating qualitative data results

Producing themes is only valuable if those themes accurately represent your data and withstand scrutiny. Verification ensures your findings are reliable, valid, and actionable rather than reflecting researcher bias or selective interpretation. This phase separates rigorous analysis from anecdotal reporting.

Use triangulation to cross-verify findings from multiple data sources or methods. If interviews, focus groups, and observational data all point to the same theme, you can be more confident in that finding. Triangulation also involves comparing your interpretations with existing literature or theoretical frameworks to see if they align with or challenge established knowledge. When different data types converge on similar conclusions, your findings gain credibility.

Peer debriefing and member checking validate interpretations and reduce individual bias. Peer debriefing involves presenting your analysis to colleagues who can question your reasoning, point out alternative explanations, and challenge assumptions. Member checking returns findings to participants themselves to confirm whether your interpretations resonate with their experiences. While participants may not agree with every analytical conclusion, their feedback helps ensure you haven’t misrepresented their perspectives.

Compare qualitative themes with quantitative metrics for a comprehensive perspective. Numbers tell you what and how much, while qualitative data explains why and how. For instance, survey data might show 67% of customers rate service as poor, while qualitative interviews reveal that long wait times and impersonal interactions drive dissatisfaction. Combining qualitative and quantitative data leads to more robust client solutions by providing both statistical evidence and contextual understanding.
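When you set themes beside survey metrics, it helps to know how widely each theme appears among participants. The sketch below computes that share, assuming coded data is available as pairs of participant ID and theme; the function name `theme_prevalence` and the data layout are hypothetical.

```python
def theme_prevalence(coded_segments: list[tuple[str, str]], theme: str) -> float:
    """Share of participants with at least one segment coded to the given theme.

    coded_segments holds (participant_id, theme) pairs, one per coded segment.
    Participants with no coded segments are not represented in the input,
    so they are not counted in the denominator.
    """
    participants = {pid for pid, _ in coded_segments}
    if not participants:
        return 0.0
    with_theme = {pid for pid, coded in coded_segments if coded == theme}
    return len(with_theme) / len(participants)
```

Prevalence in a small interview sample describes that sample only. Use it to decide which themes to examine against the survey results, not as a population estimate.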

| Verification Method | Purpose | Application |
|---|---|---|
| Triangulation | Cross-check findings across sources | Compare interviews, observations, documents |
| Peer debriefing | Challenge interpretations and assumptions | Present analysis to research colleagues |
| Member checking | Validate accuracy of participant perspectives | Share findings with interview subjects |
| Negative case analysis | Test themes against contradictory evidence | Actively seek data that doesn’t fit patterns |
| Audit trail | Document analytical decisions for transparency | Maintain memos, codebook versions, decision logs |

Prepare clear, actionable reports to communicate findings effectively. Structure reports around themes rather than simply listing what participants said. Include representative quotes that illustrate each theme, but use them as evidence rather than letting them stand alone. Connect findings explicitly to research questions and business objectives. Visualizations like thematic maps, concept diagrams, and quote matrices help stakeholders grasp complex patterns quickly.

Understand limitations and acknowledge potential biases in your analysis. Qualitative research doesn’t claim statistical generalizability, so be clear about the scope and boundaries of your findings. Reflect on how your own background, assumptions, and research design choices may have influenced what you noticed or emphasized. Transparency about limitations strengthens credibility by demonstrating methodological awareness. Consider whether combining qualitative and quantitative methods would address gaps in your current approach.

Key practices for maintaining rigor throughout verification:

- Document all analytical decisions in memos or decision logs

- Actively search for negative cases that contradict emerging themes

- Maintain an audit trail showing how you moved from raw data to conclusions

- Use multiple analysts when possible to compare interpretations

- Ground every claim in specific data examples rather than generalizations

Enhance your qualitative data analysis with expert support

Navigating the complexities of qualitative data analysis becomes easier when you have experienced partners who understand both methodology and your specific research objectives. Veridata Insights offers tailored qualitative research services designed to streamline your analysis workflow, from initial study design through final reporting. Our team brings advanced analytical tools, proven coding frameworks, and deep expertise in transforming raw qualitative data into actionable insights that drive decisions.

Whether you need support with a single phase like coding and thematic analysis or comprehensive end-to-end research services, we provide flexible solutions with no project minimums. Benefit from expert methodologies that combine qualitative depth with quantitative rigor, ensuring your findings are both meaningful and measurable. Our analysts specialize in partnerships that enhance your internal capabilities rather than replacing them.

Explore our qualitative market research services to discover how we help market researchers, analysts, and academics achieve deeper insights through structured workflows and best practices. Ready to elevate your qualitative data analysis? Contact Veridata Insights today to discuss how we can support your next research project.

Frequently asked questions

What is a qualitative data analysis workflow?

A qualitative data analysis workflow is a systematic, step-by-step process that guides researchers from raw data collection through verified insights. It typically includes preparation and organization, iterative coding to identify concepts, thematic analysis to reveal patterns, and verification to ensure findings are reliable and actionable. Following a structured workflow prevents common pitfalls like data overwhelm, inconsistent coding, and biased interpretation.

Why are coding techniques important in qualitative analysis?

Coding techniques transform unstructured text into organized, analyzable categories that reveal patterns and meanings hidden in raw data. Open coding captures initial concepts close to the data, while axial coding builds relationships between codes to develop explanatory frameworks. Without systematic coding, analysis becomes subjective storytelling rather than rigorous research. Quality coding also enables collaboration, as multiple researchers can apply consistent definitions.

How can qualitative and quantitative data be integrated effectively?

Integration works best when qualitative findings explain patterns identified in quantitative data, or when numbers validate themes emerging from qualitative analysis. Use qualitative interviews to explore why survey respondents answered certain ways, or compare thematic prevalence against statistical frequencies. Sequential designs where one method informs the other, or concurrent designs analyzed together, both produce richer insights than either approach alone.

What are common challenges in qualitative data analysis?

Researchers frequently struggle with managing large volumes of unstructured data, maintaining coding consistency across team members, avoiding confirmation bias, and determining when analysis is complete. Time constraints often pressure analysts to rush through coding or skip verification steps. Selecting appropriate software and learning its features also creates barriers. Addressing these challenges requires disciplined workflows, clear codebooks, regular team calibration, and realistic project timelines.

When should I consider external support for qualitative analysis?

Consider partnering with experienced qualitative research firms when you lack in-house expertise, face tight deadlines, need specialized methodologies, or want independent validation of findings. External support proves valuable for high-stakes projects where credibility matters, complex multi-method studies, or when your team needs training in advanced analytical techniques. The right partner enhances rather than replaces your capabilities, providing flexible support tailored to your specific needs and budget.
