TL;DR:

- Methodological integrity is crucial because courts scrutinize how survey data was collected before they weigh the results. Sound design, including a clearly defined universe and unbiased questions, supports admissibility and credibility. Expert support strengthens defensibility, especially with niche populations and complex evidentiary requirements.

Surveys are powerful legal tools, but here’s what many attorneys get wrong: the outcome matters far less than how you got there. A survey with impressive numbers can still be thrown out of court if the methodology doesn’t hold up to scrutiny. Admissibility is not just about what your data shows. It’s about how your questions were written, who answered them, and whether the design would pass the Daubert test for reliability and relevance. Get the methodology right, and your survey becomes a cornerstone of your case. Get it wrong, and it becomes ammunition for opposing counsel.

Table of Contents

- Why survey methodology is critical in legal cases

- Key elements of effective survey design for attorneys

- Common pitfalls and how to avoid them

- Case-based examples: Applying survey methodology in law

- Advanced considerations: Niche universes, online tools, and rebuttals

- The uncomfortable truth most attorneys miss about surveys

- Level up your legal surveys with expert support

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Survey reliability matters | Courts scrutinize survey methods, not just results, affecting admissibility and case outcomes. |
| Sampling must fit the universe | Define your survey population precisely and match sample size to the legal context and goals. |
| Quality controls are essential | Implement unbiased questions, control groups, and screening measures for credible, defensible evidence. |
| Tailor for special cases | Niche or small populations require adapted methodologies and deeper documentation. |
| Expert input elevates success | Strategic survey design and expert validation make legal surveys more persuasive in court. |

Why survey methodology is critical in legal cases

Survey evidence shows up across a wide range of litigation types. Trademark infringement. Antitrust. Employment disputes. Damages calculations. In each of these contexts, attorneys rely on surveys to establish facts that are difficult to prove through documents or testimony alone. A confusion survey in a trademark case, for instance, can demonstrate whether consumers actually mistake one brand for another. That’s compelling evidence, but only if the survey was designed correctly.

The problem is that courts do not accept surveys simply because they contain data. Under the Daubert standard, admissibility turns on reliability and relevance, and surveys are more often excluded or given reduced weight because of design flaws, such as poorly worded questions, missing controls, and non-representative samples, than because of statistical errors. In other words, a technically accurate dataset built on a biased survey is still weak evidence.

“A survey’s value in court is inseparable from the rigor of the process that produced it. Judges evaluate methodology first, findings second.”

We’ve seen cases where surveys were excluded not because the numbers were wrong, but because the questions led respondents, the sample didn’t represent the right population, or there was no control group. Courts have become sharper at spotting these gaps. Reliable research methodology in court is not optional. It is the foundation.

Where surveys have the most impact for attorneys:

- Trademark confusion and dilution cases

- False advertising claims where consumer perception is disputed

- Antitrust matters requiring market definition or competitive impact data

- Employment class actions needing representative workforce data

- Damages assessments requiring consumer willingness-to-pay data

- Expert witness support where primary data is necessary

Key elements of effective survey design for attorneys

Understanding why methodology matters is step one. Building a sound survey is step two, and that is where many attorneys and their consultants stumble. Survey methodology for litigation requires a properly defined universe of actual purchasers or decision-makers; representative sampling with enough respondents (roughly 400 for mass-market products, fewer for niche audiences); neutral question wording; realistic marketplace stimuli; control groups to calculate net confusion; and data integrity measures such as screening out speeders.

Let’s break each of these down.

Define the universe. The “universe” refers to the population of people whose perceptions are legally relevant to your case. In a trademark matter, that’s typically the actual buyers or potential buyers of the product. Get this wrong and your entire sample is invalid. If you survey the general public when only specialty contractors purchase the product, the data won’t hold up.

Sampling strategy. Effective sampling methods are essential for legal credibility. A sample needs to be both sufficiently large and genuinely representative. About 400 respondents is the general benchmark for mass-market products. Niche markets can justify smaller samples, but you must document the rationale clearly.
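To see where the 400 benchmark comes from, here is a minimal Python sketch of the textbook margin-of-error calculation for a proportion. It assumes simple random sampling, a 95% confidence level, and the worst case p = 0.5. Online panels are not true random samples, so treat the result as a planning rule of thumb, not a legal threshold. The function names are illustrative.

```python
import math

Z_95 = 1.96  # two-sided z-value for 95% confidence


def margin_of_error(n: int, p: float = 0.5, z: float = Z_95) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion.

    Assumes simple random sampling; p = 0.5 gives the widest (worst-case) interval.
    """
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(target_moe: float, p: float = 0.5, z: float = Z_95) -> int:
    """Smallest n whose margin of error is at or below target_moe."""
    return math.ceil((z ** 2) * p * (1 - p) / target_moe ** 2)


if __name__ == "__main__":
    print(f"n=400 -> +/-{margin_of_error(400):.1%}")
    print(f"+/-5% needs n={required_sample_size(0.05)}")
```

Under these assumptions, 400 respondents give a margin of error of about ±4.9 percentage points, and about 385 are needed for ±5 points. Because precision improves only with the square root of n, quadrupling the sample merely halves the margin.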

Question wording. Neutral, clear questions are non-negotiable. A leading question like “Would you say this product is similar to the one you just saw?” introduces bias. Better: “How similar or different do you find these two products?” Qualitative research in legal cases can also inform how real consumers talk about products, which helps you write question language that mirrors authentic thinking.

Control groups. This is where many surveys fall flat. A control group sees a stimulus that has been altered to remove the allegedly infringing element. By comparing the confusion rate among the exposed group against the control group, you isolate the actual effect of the contested element. Without this, opposing counsel will argue that your confusion rate reflects background noise, not infringement.
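The net confusion calculation can be sketched in a few lines: subtract the control cell's confusion rate from the test cell's, then check that the difference is distinguishable from zero. This Python example assumes a two-proportion z-test with normal-approximation intervals, which is one common choice but not the only defensible one; the cell counts in the usage are made up for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Cell:
    """Counts for one survey cell: the test group or the control group."""
    confused: int  # respondents who gave a confusion-indicating answer
    total: int     # respondents in the cell

    @property
    def rate(self) -> float:
        return self.confused / self.total


def net_confusion(test: Cell, control: Cell, z: float = 1.96):
    """Return (net_rate, ci_low, ci_high, z_stat) for test minus control.

    The confidence interval uses the unpooled normal approximation; the
    z statistic uses the pooled standard error under H0: equal rates.
    """
    net = test.rate - control.rate
    se_diff = math.sqrt(
        test.rate * (1 - test.rate) / test.total
        + control.rate * (1 - control.rate) / control.total
    )
    pooled = (test.confused + control.confused) / (test.total + control.total)
    se_pooled = math.sqrt(pooled * (1 - pooled) * (1 / test.total + 1 / control.total))
    return net, net - z * se_diff, net + z * se_diff, net / se_pooled


if __name__ == "__main__":
    # Hypothetical counts, for illustration only.
    net, low, high, z_stat = net_confusion(Cell(80, 200), Cell(24, 200))
    print(f"net confusion {net:.1%} (95% CI {low:.1%} to {high:.1%}), z = {z_stat:.2f}")
```

In this hypothetical, 40% confusion in the test cell minus 12% in the control cell yields 28% net confusion. Reporting the raw 40% alone would overstate the effect attributable to the contested element.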

Data integrity. Respondents who speed through a 15-minute survey in under 90 seconds are not giving thoughtful answers. Screening speeders, eliminating duplicate IP addresses, and building attention checks into the questionnaire are all critical quality controls. B2B survey design tips apply here too, especially when your universe involves business decision-makers.
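The speeder and duplicate-IP screens can be sketched as a simple filter. This Python example is a hypothetical illustration: the field names are invented, and the speeder rule (flag anyone who finishes in under one-third of the median completion time) is one common heuristic, not a court-mandated standard. Whatever threshold you choose, fix and document it before fielding. The function returns flagged responses with a reason instead of silently deleting them, which preserves an audit trail. That matters for IP checks in particular, because respondents behind one corporate network in a B2B study can legitimately share an address.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Response:
    respondent_id: str
    ip_address: str
    duration_sec: float  # time from first to last screen


def screen_responses(responses: list[Response], speed_fraction: float = 1 / 3):
    """Split responses into (kept, flagged), attaching a reason to each flag.

    Assumed rules, for illustration only:
      * speeder: finished in under speed_fraction of the median completion time
      * duplicate_ip: any response after the first one from the same IP address
    """
    cutoff = median(r.duration_sec for r in responses) * speed_fraction
    seen_ips: set[str] = set()
    kept, flagged = [], []
    for r in responses:
        if r.duration_sec < cutoff:
            flagged.append((r, "speeder"))
        elif r.ip_address in seen_ips:
            flagged.append((r, "duplicate_ip"))
        else:
            kept.append(r)
        seen_ips.add(r.ip_address)  # later responses from this IP count as duplicates
    return kept, flagged
```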

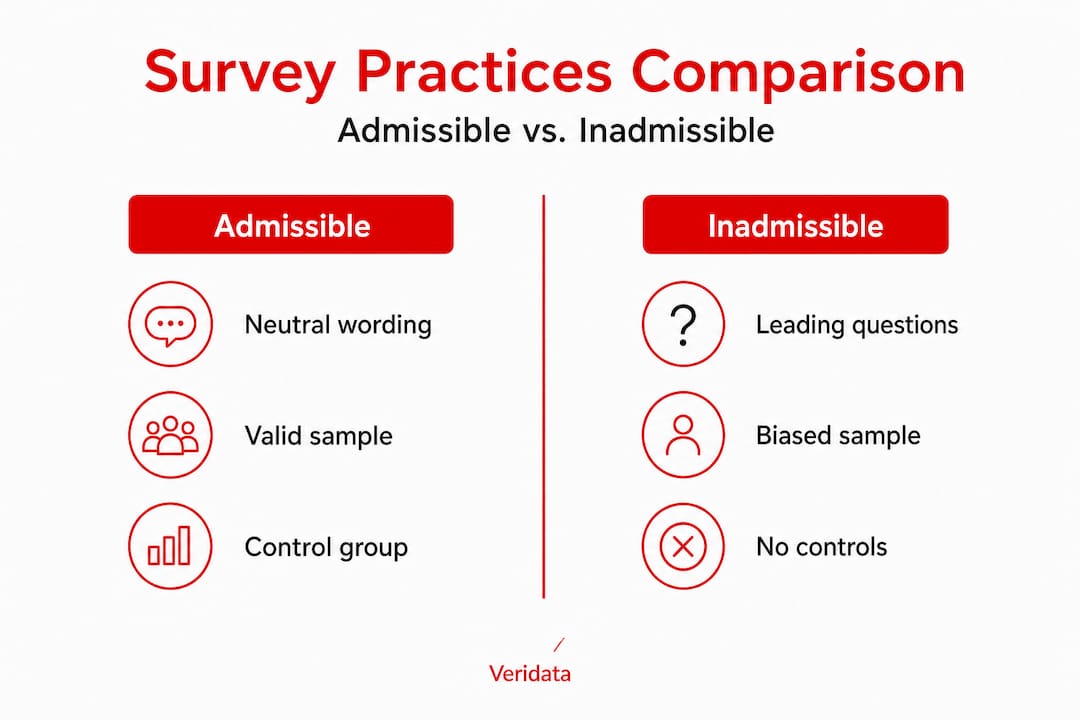

Comparison: Admissible vs. inadmissible survey practices

| Practice | Admissible | Inadmissible |
|---|---|---|
| Universe definition | Actual purchasers or potential buyers | General population when irrelevant |
| Sample size | ~400 or statistically justified | Convenience sample, no rationale |
| Question wording | Neutral and balanced | Leading, biased, or double-barreled |
| Control group | Present and documented | Absent or poorly designed |
| Stimuli replication | Mirrors marketplace conditions | Abstract or artificial presentation |
| Data quality | Speeders screened, attention checks used | No quality controls |

Steps for designing a defensible legal survey:

- Define the relevant universe based on the legal issue at hand

- Determine sample size with documented statistical rationale

- Draft questions using neutral, marketplace-reflective language

- Build a control group with a modified stimulus

- Program attention checks and speeder screens into the survey tool

- Pilot the survey with a small test group to catch errors

- Launch to a representative, recruited sample

- Document every design decision with written rationale

- Analyze data with appropriate statistical methods

- Prepare a methodology report that survives deposition
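The documentation step is the one most often skipped. One lightweight way to enforce it is a decision log that refuses any entry without a written rationale. The schema below is hypothetical, a sketch rather than any standard format. Its JSON export could be attached to the methodology report.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class DesignDecision:
    """One documented survey-design choice (hypothetical schema)."""
    step: str       # e.g. "universe", "sample size", "control stimulus"
    decision: str   # what was decided
    rationale: str  # why, in plain language an expert can defend at deposition
    decided_on: str = field(default_factory=lambda: date.today().isoformat())


@dataclass
class DecisionLog:
    matter: str
    decisions: list[DesignDecision] = field(default_factory=list)

    def record(self, step: str, decision: str, rationale: str) -> None:
        # Refuse undocumented choices: every decision needs a written rationale.
        if not rationale.strip():
            raise ValueError(f"decision for '{step}' has no written rationale")
        self.decisions.append(DesignDecision(step, decision, rationale))

    def to_json(self) -> str:
        # asdict() recurses into the nested DesignDecision entries.
        return json.dumps(asdict(self), indent=2)
```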

Pro Tip: Before launch, test your questions with impartial people who match your target population, not fellow lawyers. If they interpret a question differently than you intended, a judge might too.

Common pitfalls and how to avoid them

Even experienced attorneys who understand the principles make mistakes in execution. Flaws like poor question design, lack of controls, and non-representative samples are the most common grounds for exclusion or reduced evidentiary weight.

Here’s what we see happen most often:

- Wrong universe: Surveying the general public instead of actual or prospective buyers

- Biased question order: Priming respondents early in the survey to answer a key question a certain way

- Missing controls: Submitting confusion data without a control condition to establish baseline rates

- Skipping pilot testing: Launching a survey without catching ambiguous or leading questions first

- No documentation: Failing to record why design decisions were made, leaving the methodology open to attack

- Over-relying on open-ended questions: Without coding protocols, these answers are hard to quantify and easy to challenge
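For open-ended questions, one widely used way to show that a coding protocol is applied consistently is to have two coders categorize the same responses independently and report their agreement with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. Here is a minimal Python sketch; the category labels in any usage are hypothetical.

```python
from collections import Counter


def cohens_kappa(coder_a: list[str], coder_b: list[str]) -> float:
    """Cohen's kappa for two coders who each assigned one category per response."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must code the same non-empty set of responses")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Agreement expected by chance, given each coder's category frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1:
        return float("nan")  # undefined: both coders used one identical category
    return (observed - expected) / (1 - expected)
```

A kappa of 1 means perfect agreement and 0 means agreement no better than chance. Under a written protocol, disagreements are then reconciled and the reconciliation itself documented.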

Attention checks are simple questions embedded in the survey that any attentive respondent would answer correctly. A question like “Please select ‘Strongly agree’ for this item” catches autopilot respondents. Survey data quality measures go even further, including device fingerprinting, IP deduplication, and response consistency scoring.
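An instructed-response item like the one above can be combined with a simple check for straightlining (giving the identical rating to every item in a grid), as sketched below in Python. Straightlining is only one crude consistency signal. The expected answer, the minimum grid length, and the field names are assumptions for illustration.

```python
from dataclasses import dataclass, field

# Assumption: the instructed item reads "Please select 'Strongly agree' for this item."
EXPECTED_ATTENTION_ANSWER = "Strongly agree"


@dataclass
class Respondent:
    respondent_id: str
    attention_answer: str  # answer given to the instructed-response item
    grid: list[int] = field(default_factory=list)  # ratings on a block of 1-5 items


def quality_flags(r: Respondent, min_grid_items: int = 5) -> list[str]:
    """Return quality flags for one respondent (an empty list means no flags)."""
    flags = []
    if r.attention_answer != EXPECTED_ATTENTION_ANSWER:
        flags.append("failed_attention_check")
    # Straightlining: the same rating on every item of a reasonably long grid.
    if len(r.grid) >= min_grid_items and len(set(r.grid)) == 1:
        flags.append("straightlined_grid")
    return flags
```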

For niche universes, standard recruitment panels may not cut it. If your case involves specialty physicians, senior procurement executives, or a narrow geographic market, you need a recruiter who knows how to reach that audience reliably. Fraud prevention in surveys is just as relevant for legal work as it is for commercial research.

Pro Tip: Always pair survey data with thorough documentation. Every question, every design choice, every screening criterion should be captured in writing. When opposing counsel deposes your survey expert, that paper trail is your best defense.

Case-based examples: Applying survey methodology in law

Theory is useful. Real-world examples are better. Here are three distinct contexts where survey methodology directly affects legal outcomes.

Example 1: Fee surveys for prevailing rate determinations. NALFA’s prevailing rate surveys follow a structured process: questionnaire design with client input, identification of the target population, an email campaign launch, participation monitoring, and reporting, with data sorted into charts and graphs alongside a narrative methodology and privacy protections throughout. This is a model of methodological discipline. When fee surveys follow this structure, the data is far more defensible in fee dispute litigation.

Example 2: Benchmarking surveys for firm rankings. Benchmark Litigation’s rankings draw on practitioner surveys in which lawyers and in-house counsel recommend firms and attorneys. These surveys are designed to capture perceptions within a professional universe, not the general public. The methodology is adapted to the audience, the question format, and the purpose. That’s a lesson for any legal survey: design must serve the specific objective.

Example 3: Lawyer satisfaction surveys for workforce research. The Law360 Pulse 2025 survey polled 1,184 U.S. attorneys online from January through March 2025, 38% of them associates, measuring satisfaction with their jobs, hours, and advancement. Only 61% reported overall satisfaction, a record low. Segmenting results by role, seniority, and practice area is exactly what makes satisfaction data actionable for HR strategy and workforce planning in law firms.

“The most useful legal surveys don’t just collect data. They are designed around the question the court or client actually needs answered.”

Legal survey methodology: Example frameworks

| Survey objective | Population | Method | Sample size | Analysis format |
|---|---|---|---|---|
| Trademark confusion | Actual purchasers | Online panel with screening | ~400 | Net confusion rate with control |
| Prevailing fee rate | Practicing attorneys in market | Email to targeted list | 200+ | Median rates by practice area |
| Firm benchmarking | Lawyers and in-house counsel | Practitioner referral survey | Variable | Tiered ranking with narrative |
| Workforce satisfaction | Attorneys at target firm | Anonymous online survey | Full staff | Cross-tabulation by seniority |
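Two of the analysis formats in the table, median rates by practice area and a cross-tabulation by seniority, are straightforward to compute once the data is clean. This sketch uses only the Python standard library; the field names and rows are made up for illustration.

```python
from collections import Counter, defaultdict
from statistics import median


def median_by_group(rows, group_key, value_key):
    """Median of value_key within each group (e.g., hourly rate by practice area)."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_key]].append(row[value_key])
    return {g: median(vals) for g, vals in sorted(groups.items())}


def crosstab_shares(rows, row_key, col_key):
    """Row percentages: share of each answer (col_key) within each segment (row_key)."""
    counts = defaultdict(Counter)
    for row in rows:
        counts[row[row_key]][row[col_key]] += 1
    return {
        seg: {ans: n / sum(ctr.values()) for ans, n in sorted(ctr.items())}
        for seg, ctr in sorted(counts.items())
    }


if __name__ == "__main__":
    # Made-up rows, for illustration only.
    fees = [
        {"practice_area": "IP", "hourly_rate": 600},
        {"practice_area": "IP", "hourly_rate": 700},
        {"practice_area": "IP", "hourly_rate": 650},
        {"practice_area": "Employment", "hourly_rate": 400},
        {"practice_area": "Employment", "hourly_rate": 500},
    ]
    print(median_by_group(fees, "practice_area", "hourly_rate"))
```

Medians are often preferred for fee data because a few very high rates would pull a mean upward.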

Workflow for applying methodology to a new legal matter:

- Clarify the legal question the survey must answer

- Identify who the legally relevant respondents are

- Choose a recruitment method suited to that population

- Build and test the questionnaire with controls and quality screens

- Launch, monitor, and close data collection

- Analyze with appropriate segmentation and control comparison

- Prepare a methodology report and expert witness materials

For hard-to-reach professional audiences, in-depth legal interview recruiting and sample recruitment strategies are areas where a specialized research partner adds real value.

Advanced considerations: Niche universes, online tools, and rebuttals

Legal survey work does not always follow a standard template. Edge cases present real challenges:

- Small or niche universes can justify smaller samples (as few as 35 physicians in one specialized medical case).

- Internet surveys are now the standard, replacing mall intercepts.

- DIY surveys are possible if well designed, but courts view them with skepticism.

- Rebuttal surveys may introduce new data, but they carry timing risks that can lead to exclusion.
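One statistical reason a small universe can support a small sample is the finite population correction: when the sample is a meaningful fraction of the entire universe, fewer respondents are needed for the same precision. The Python sketch below assumes random selection from a fully enumerated universe and complete response, which real niche studies rarely achieve. The universe size of 50 in the usage is hypothetical and is not drawn from the physician case mentioned above.

```python
import math


def sample_size_fpc(population: int, target_moe: float, p: float = 0.5, z: float = 1.96) -> int:
    """Sample size for estimating a proportion, with the finite population correction.

    n0 is the usual infinite-population size; the correction
    n = n0 / (1 + (n0 - 1) / N) shrinks it when the universe N is small.
    """
    n0 = (z ** 2) * p * (1 - p) / target_moe ** 2
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


if __name__ == "__main__":
    print(sample_size_fpc(50, 0.10))         # hypothetical universe of 50 specialists
    print(sample_size_fpc(1_000_000, 0.05))  # effectively an unlimited universe
```

For a hypothetical universe of 50 specialists and a ±10-point target, the formula gives 34 respondents. For a universe of one million at ±5 points, it gives 385, essentially the uncorrected figure.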

Internet-based surveys have largely replaced in-person mall intercepts for litigation purposes. They are faster, more scalable, and easier to document. But the methodology must be just as rigorous. A poorly designed online survey is no better than a poorly designed in-person one.

DIY survey tools are tempting because they are fast and cheap. But courts and opposing counsel will scrutinize every element of your design. Without documented expert involvement, DIY surveys often lack the methodological credibility needed to survive challenge. Comprehensive legal survey services bring the structure and defensibility that DIY efforts typically miss.

Checklist for assessing complexity and red flags:

- Is the universe small, specialized, or geographically limited?

- Are there multiple competing definitions of the relevant population?

- Is the schedule tight, and could opposing counsel respond with a rebuttal survey?

- Does the subject matter require technical literacy from respondents?

- Is the evidentiary weight of the survey central to the case?

- Will the survey expert face deposition or cross-examination?

Pro Tip: Anticipate challenges from opposing counsel before you finalize your design. Document every decision, from universe definition to question order, and have a ready rationale for each choice. The best survey experts can explain not just what they did, but exactly why.

The uncomfortable truth most attorneys miss about surveys

Here is something we’ve learned from years of working on legally defensible research: technical perfection alone does not win cases. A survey can check every methodological box and still fail to move a judge or jury if it doesn’t tell a coherent story.

Courts respond to credibility. Credibility comes from design choices that mirror real life, expert witnesses who can explain methodology in plain language, and data that connects clearly to the legal claim. A qualitative strategy for legal cases can actually strengthen a quantitative survey by providing the narrative context that makes numbers meaningful.

The attorneys who get the most out of survey evidence treat it as one prong of a broader advocacy strategy, not a standalone silver bullet. They pair surveys with interviews, market observations, and expert testimony. They pilot their survey instruments with non-lawyer audiences to catch assumptions that legal minds overlook. They bring in neutral, credentialed researchers rather than internal staff who might appear biased.

We also think the obsession with sample size misses the point. Courts have accepted surveys with 35 respondents when the universe was genuinely that small and the methodology was airtight. A sample of 1,000 with biased questions is weaker than a sample of 150 with a clean design and documented rationale. Quality over quantity. Every time.
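This trade-off can be made concrete with the standard decomposition of mean squared error into squared bias plus sampling variance. Leading questions add bias that does not shrink as the sample grows, while sampling error does. In this Python sketch, the 10-point bias attributed to leading wording is a hypothetical figure chosen for illustration.

```python
import math


def sampling_se(n: int, p: float = 0.5) -> float:
    """Standard error of a proportion under simple random sampling."""
    return math.sqrt(p * (1 - p) / n)


def rmse(bias: float, n: int, p: float = 0.5) -> float:
    """Root mean squared error = sqrt(bias**2 + sampling variance).

    Only the variance term shrinks as n grows; bias from leading
    questions or a wrong universe does not.
    """
    return math.sqrt(bias ** 2 + sampling_se(n, p) ** 2)


if __name__ == "__main__":
    # Hypothetical: leading wording inflates the measured rate by 10 points.
    print(f"biased, n=1000: {rmse(0.10, 1000):.3f}")
    print(f"clean,  n=150:  {rmse(0.00, 150):.3f}")
```

Under these assumptions, the biased 1,000-person survey has a root mean squared error of about 10.1 points, versus about 4.1 points for the clean 150-person survey.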

Level up your legal surveys with expert support

If you’ve made it this far, you know that building a credible legal survey takes more than sending out a link and collecting responses. It takes methodological precision, careful recruitment, and documentation that holds up under adversarial scrutiny.

At Veridata Insights, we work with attorneys at every stage: universe definition, questionnaire design, sampling, data collection, quality control, analysis, and reporting. No project minimums. Seven days a week, 365 days a year. Whether you need a full-service engagement or just need us to review your questionnaire before launch, we are ready to help. We specialize in recruiting hard-to-reach audiences including legal professionals, business decision-makers, and healthcare populations. When your survey needs to stand up in court, we make sure the methodology is the last thing anyone can challenge.

Frequently asked questions

What is the Daubert standard for survey admissibility?

Under the Daubert standard, which governs expert testimony in federal and many state courts, judges assess whether a survey is reliable and relevant; flaws in design or sampling can lead to exclusion or lower evidentiary weight. It’s not enough to have numbers; the process behind them must be sound.

How many respondents are needed for a legal survey to be credible?

About 400 respondents is the general standard for mass-market products, but niche cases may justify smaller samples if the rationale is clearly documented and the universe is genuinely limited.

Why are control groups important in litigation surveys?

Control groups make it possible to calculate net confusion: the control group’s confusion rate is subtracted from the test group’s, isolating the effect of the contested element from general background noise and strengthening the survey’s overall reliability.

Can attorneys conduct DIY legal surveys?

DIY surveys face skepticism from courts even when carefully designed, making expert involvement, thorough documentation, and neutral oversight critical for any survey intended for litigation use.

What’s the difference between benchmarking and satisfaction surveys in law?

Benchmarking surveys rank firms and attorneys based on practitioner recommendations, while satisfaction surveys like Law360 Pulse measure attorneys’ job satisfaction, working conditions, and career advancement factors across a sample of practicing lawyers.