TL;DR:

- Clear research objectives are essential for valid, actionable data and effective methodology design.

- Choose quantitative, qualitative, or mixed methods based on what your research goals require.

- Ethical safeguards and rigorous planning ensure credible results and avoid costly pitfalls.

Poor research methodology doesn’t just produce bad data. It wastes budget, burns team time, and hands your business decisions over to guesswork. We’ve seen projects derailed not by lack of effort but by unclear objectives, mismatched methods, and skipped validation steps. The good news? A structured, well-designed methodology is absolutely achievable, regardless of scope or budget. Whether you’re running a quick pulse survey or a multi-phase qualitative study, the fundamentals are the same. This guide walks you through every critical step, from defining what you’re actually asking to protecting the integrity of your results.

Table of Contents

- Clarifying your research objectives and questions

- Choosing the right research approach: Quantitative, qualitative, or mixed methods

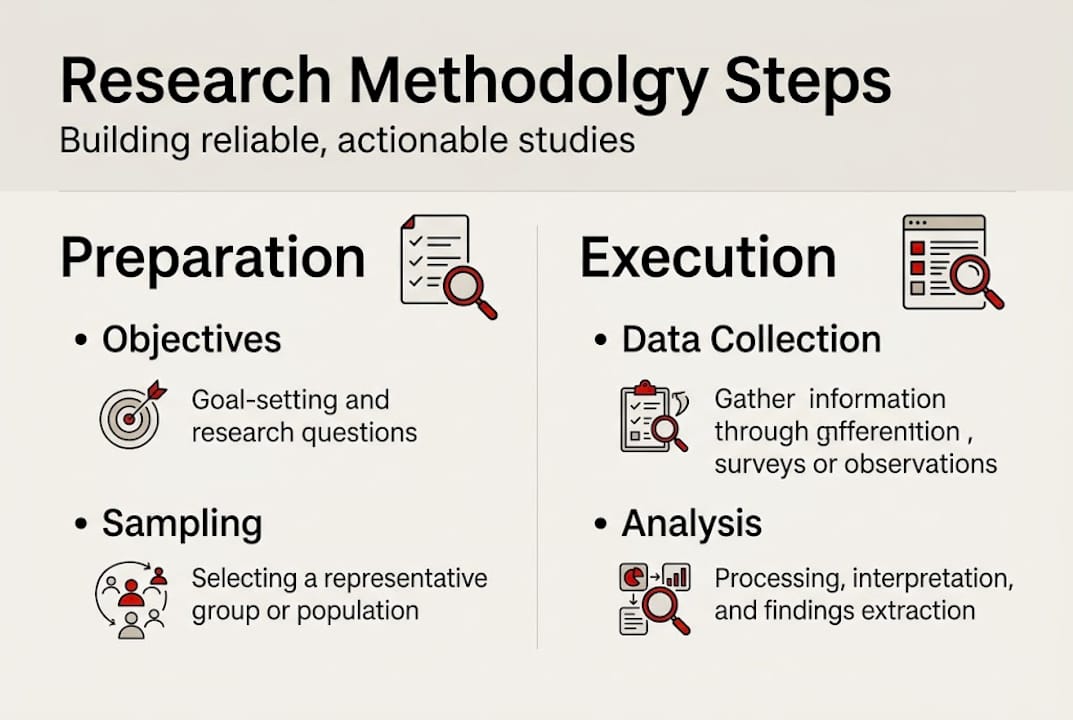

- Designing your study: Sampling, data collection, and analysis details

- Addressing ethics, validity, and common pitfalls

- Real-world lessons: What experts wish they knew about research methodology design

- Get expert support for your research methodology

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Start with clear objectives | Your research methodology should be driven by precise, actionable business questions. |
| Match method to purpose | Choose quantitative, qualitative, or mixed approaches based on your research goals. |
| Design for reliability | Thoughtful sampling and robust data collection tools yield trustworthy insights. |
| Prioritize ethics and validity | Address consent and bias, and use power analysis to ensure credible study results. |
| Iterate for success | Pilot testing and stakeholder feedback help refine your methodology for real-world impact. |

Clarifying your research objectives and questions

Before selecting a methodology, you must know exactly what you want to discover. This sounds obvious, but it’s where most projects quietly go off the rails. Fuzzy goals produce fuzzy data. And fuzzy data produces decisions nobody wants to defend in a boardroom.

Research methodology design begins with a clear purpose and research question or hypothesis. That means going beyond “we want to understand customer satisfaction” and getting to “what specific drivers predict repeat purchase behavior among our B2B clients in the healthcare sector?”

Here’s what strong objective-setting actually looks like in practice:

- Define the business problem first. What decision will this research inform? Work backward from that.

- Translate objectives into testable questions. Turn vague goals into precise questions or falsifiable hypotheses.

- Scope carefully. Scope creep is the silent killer of research timelines and budgets.

- Identify your key variables. What are you measuring, and how will you know when you’ve measured it correctly?

- Use existing literature and past studies to refine your focus and avoid reinventing what’s already known.

For project managers and business leaders, the rule is straightforward: align methodology to objectives. Exploratory purposes favor qualitative approaches. Causal relationships call for quantitative ones. Knowing this early saves significant rework later.

When designing custom research, we always push for stakeholder alignment in the first meeting. Not because it’s a best practice checkbox, but because stakeholders surface the real questions, not just the stated ones.

“The research question is not just a formality. It is the compass that keeps every methodological decision pointed in the right direction.”

Pro Tip: Bring stakeholders in before you write a single survey question. Their input often reveals assumptions baked into the original brief that would have quietly biased your entire study.

For teams working in B2B research, this step is especially critical. B2B audiences are harder to reach and more expensive to engage, so you cannot afford to learn mid-project that you asked the wrong questions.

Choosing the right research approach: Quantitative, qualitative, or mixed methods

With clearly defined objectives, the next step is selecting the methodological framework that best fits your goals. This is one of the most consequential choices you’ll make, and it’s not just a theoretical one.

Quantitative methods use surveys, experiments, and large samples to produce statistically generalizable findings. Qualitative methods use interviews, focus groups, and thematic analysis to produce rich, contextual understanding. Each has a place, and each has real limitations.

Here’s a quick comparison:

| Approach | Best for | Typical sample size | Key strength |
|---|---|---|---|
| Quantitative | Market sizing, segmentation, trend tracking | 30 to 1,000+ | Statistical confidence |
| Qualitative | Brand perception, UX exploration, concept testing | 5 to 50 | Depth and nuance |
| Mixed methods | Innovation research, complex business questions | Varies | Breadth and context |

A common rule of thumb sets 30 respondents as the floor for basic statistics, though most business studies run far larger. Qualitative research usually reaches saturation, the point where new interviews stop producing new themes, somewhere between 5 and 50 participants.
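To see why 30 is only a floor, here is a minimal Python sketch of the margin of error for a survey proportion. It assumes a simple random sample, the worst-case proportion p = 0.5, and the normal approximation; under those assumptions, 30 respondents give roughly ±18 percentage points at 95% confidence, while 1,000 give about ±3. The function name is our own illustrative choice.

```python
from math import sqrt
from statistics import NormalDist


def margin_of_error(n: int, p: float = 0.5, confidence: float = 0.95) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion.

    Assumes a simple random sample; p = 0.5 gives the widest (worst-case) interval.
    """
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95%
    return z * sqrt(p * (1 - p) / n)


for n in (30, 100, 400, 1000):
    print(f"n = {n:>4}: +/- {margin_of_error(n) * 100:.1f} percentage points")
```

Because precision improves with the square root of the sample size, quadrupling the sample only halves the margin of error, which is one reason budgets climb quickly when stakeholders want tight estimates.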

Mixed methods blend qualitative and quantitative approaches for more complete insights, and they’re particularly useful in complex business settings where you need both the “what” and the “why.”

A common and effective combination: run a quantitative survey to identify trends, then follow up with qualitative interviews to understand the story behind the numbers. This is exactly the kind of approach that makes findings actionable rather than just interesting.

When you’re deciding, ask yourself:

- Do I need to generalize findings to a larger population? Go quantitative.

- Do I need to understand motivations, emotions, or context? Go qualitative.

- Do I need both? Go mixed.

Exploring qualitative vs. quantitative approaches in more detail can help you build confidence in this choice. If you’re still unsure, ask whether your question genuinely needs both before you finalize your design. The benefits of mixed methods are real and increasingly recognized in business research.

Designing your study: Sampling, data collection, and analysis details

Once you’ve selected the broad approach, rigor in the design details is what protects your study’s validity. This is where good intentions either become solid research or quietly fall apart.

Follow these steps to build a study that holds up:

- Define your sample frame. Who exactly are you studying? Be specific about inclusion and exclusion criteria.

- Choose your sampling method. Random sampling maximizes generalizability. Purposeful sampling targets specific expertise or experience. Snowball sampling works for hard-to-reach populations.

- Determine sample size. For quantitative studies, use power analysis to calculate the minimum sample needed to detect a meaningful effect. For qualitative studies, plan for saturation.

- Select your data collection tools. Surveys for quant, in-depth interviews or focus groups for qual, observation for behavioral studies.

- Plan your analysis approach. Statistical methods for quantitative data, thematic or content analysis for qualitative.

- Document everything. Reproducibility depends on clear records of every design decision.
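Here’s a minimal Python sketch of the power analysis step, assuming the simplest common case: comparing the means of two equal-sized groups with a two-sided test. It uses the normal approximation, so treat the result as a planning estimate; power software based on the t distribution will return slightly larger numbers. The function name and defaults (α = 0.05, 80% power) are our illustrative choices, and the effect size is expressed as a standardized difference (Cohen’s d).

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate per-group sample size for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * (z_{1 - alpha/2} + z_{power})**2 / d**2,
    where d is the standardized effect size.
    """
    z = NormalDist().inv_cdf
    n = 2 * (z(1 - alpha / 2) + z(power)) ** 2 / effect_size ** 2
    return ceil(n)  # round up: a fractional respondent still means one more person


print(n_per_group(0.5))  # medium effect
print(n_per_group(0.2))  # small effect
```

With these defaults, a medium effect (d = 0.5) needs about 63 respondents per group and a small effect (d = 0.2) about 393, which is why deciding on the smallest effect worth detecting is a business decision as much as a statistical one.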

Here’s a simple sampling method reference:

| Method | Use case | Strength |
|---|---|---|
| Random | Large population studies | High generalizability |
| Purposeful | Qualitative, expert audiences | Targeted depth |
| Convenience | Exploratory or pilot studies | Fast and low cost |
| Snowball | Hard-to-reach or niche groups | Access to closed networks |
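Snowball sampling is easiest to reason about as recruitment in waves. The sketch below is a hypothetical illustration: `snowball_sample` and the `example_referrals` network are invented for the example, and in a real study you would learn each participant’s referrals only after contacting them. Keep in mind that samples built this way are not random, so they trade generalizability for access.

```python
from collections import deque


def snowball_sample(seeds, referrals, target_size, max_waves=3):
    """Recruit participants wave by wave through referrals until the target is met.

    seeds: participants you can reach directly (wave 0).
    referrals: hypothetical mapping from a participant to the people they would refer.
    max_waves: participants in this wave are recruited but not asked for referrals.
    """
    recruited = []
    seen = set(seeds)
    queue = deque((person, 0) for person in seeds)  # (participant, wave number)
    while queue and len(recruited) < target_size:
        person, wave = queue.popleft()
        recruited.append(person)
        if wave >= max_waves:
            continue  # stop following referrals beyond the last wave
        for contact in referrals.get(person, []):
            if contact not in seen:  # never recruit the same person twice
                seen.add(contact)
                queue.append((contact, wave + 1))
    return recruited


# Hypothetical referral network among niche B2B respondents.
example_referrals = {"A": ["C", "D"], "B": ["D", "E"], "C": ["F"], "E": ["G"]}
print(snowball_sample(["A", "B"], example_referrals, target_size=5))
```

Tracking the wave number also gives you something to document: how far each participant sits from your original seeds, which helps when you later assess how much the starting contacts shaped the sample.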

Good questionnaire design is also a core part of this stage. Reliability starts with how questions are written, ordered, and tested. Ambiguous wording or leading questions will corrupt your data before you even collect a single response.

For teams managing custom methodology across multiple phases, documenting design decisions as you go is not optional. It’s what allows you to defend your findings later.

Pro Tip: Always run a pilot test with a small group before full launch. A 10-person pilot can surface confusing questions, technical issues, and timing problems that would otherwise contaminate your entire dataset.

Addressing ethics, validity, and common pitfalls

Even the best-designed studies can fail without robust ethical and validity safeguards. This is the stage where many research teams feel confident and skip ahead. That’s a costly mistake.

Ethical requirements are not just procedural. They protect your participants and your findings. Key considerations include:

- Informed consent: Every participant must understand what they’re agreeing to, how their data will be used, and how it will be protected.

- Bias minimization: Interviewer bias, question order effects, and sample skew all compromise validity.

- Privacy protection: Store and handle data in compliance with applicable regulations and industry standards.

- Institutional review: If you’re working in healthcare or with vulnerable populations, IRB approval may be required.

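Validity techniques that actually work in practice include triangulation (using multiple data sources or methods to confirm findings), pilot studies, and power calculations to ensure your sample is adequate. Controls and baseline measurements can also substantially reduce the required sample size; adjusting for a baseline that correlates strongly with the outcome can cut it roughly in half. Underpowered studies, meanwhile, miss real effects, and the significant results they do produce are more likely to be false positives or overstated, meaning you’ll draw confident conclusions from noise.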
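The link between low power and misleading results can be checked with simple arithmetic. This Python sketch computes the share of statistically significant results that reflect a real effect (the positive predictive value), assuming a hypothetical scenario in which one in four effects you test actually exists and α = 0.05. Under those assumptions, about 84% of significant results are real at 80% power, but only about 57% at 20% power.

```python
def positive_predictive_value(power: float, prior: float, alpha: float = 0.05) -> float:
    """Share of significant results that reflect a real effect.

    power: probability of detecting an effect that exists.
    prior: share of tested effects that actually exist (an assumption).
    alpha: false positive rate when no effect exists.
    """
    true_positives = power * prior
    false_positives = alpha * (1 - prior)
    return true_positives / (true_positives + false_positives)


# Hypothetical scenario: 1 in 4 effects you test is real.
for power in (0.80, 0.20):
    ppv = positive_predictive_value(power, prior=0.25)
    print(f"power {power:.0%}: {ppv:.0%} of significant results are real")
```

The false positive rate per test stays at α either way; what changes is how many real effects you catch, so at low power the false alarms make up a much larger share of your "findings."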

Ethical and valid methodology isn’t just academic rigor. It’s what makes your results defensible and decision-ready. When your findings inform a major product launch or a market entry strategy, the last thing you want is a validity question undermining the whole project.

Common pitfalls to watch for:

- Underpowered samples that can’t detect real effects

- Ignoring confounding variables that distort your findings

- Skipping pretesting and discovering problems after full rollout

- Treating ethics as a checklist rather than a design principle

Pro Tip: Adding a control group or baseline measurement doesn’t just improve your validity. It can actually lower the total number of participants you need, saving time and cost while improving confidence.
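Here is a sketch of how a baseline measurement lowers the required sample size, assuming a continuous outcome analyzed with ANCOVA (regressing the outcome on group with the baseline as a covariate). In that setting, the required sample shrinks by a factor of roughly 1 - ρ², where ρ is the correlation between baseline and outcome. The starting figure of 63 per group and the function name are hypothetical, chosen for illustration.

```python
from math import ceil


def adjusted_n(unadjusted_n: int, baseline_correlation: float) -> int:
    """Approximate per-group n after adjusting for a baseline covariate (ANCOVA).

    Residual variance shrinks by (1 - rho**2), so the required n shrinks proportionally.
    """
    return ceil(unadjusted_n * (1 - baseline_correlation ** 2))


# Hypothetical unadjusted requirement: 63 respondents per group.
for rho in (0.3, 0.5, 0.7):
    print(f"baseline correlation {rho}: {adjusted_n(63, rho)} per group")
```

A weak baseline correlation (ρ = 0.3) saves little, while a strong one (ρ = 0.7) cuts the requirement from 63 to 33 per group, close to half.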

In high-stakes contexts, the bar for reliable methodology is even higher. And if you’re looking for full-service research solutions that handle these complexities for you, the right partner makes all the difference.

“Validity isn’t a feature you add at the end. It’s a property you build in from the first line of your research plan.”

Real-world lessons: What experts wish they knew about research methodology design

With the practical steps covered, let’s talk about what separates basic plan-following from truly impactful research. Here’s what we’ve learned from real projects, not textbooks.

Most methodology guides present research as a neat, linear process. In practice, it’s messier and better for it. Iterative design, including cluster allocation adjustments and user-centered refinement, is what expert practitioners rely on to keep studies grounded in real-world conditions.

The teams that get the most value from their research are not the ones who follow a methodology perfectly. They’re the ones who revisit their objectives throughout the project, treating stakeholder feedback as design fuel rather than interference.

Rigid adherence to a method, when the method isn’t serving the purpose, is one of the most common ways smart teams produce weak results. Skipping pilot phases because you’re confident in your design is another. Confidence is good. Testing is better.

Statistical nuance also matters more than most people expect. Adjusting power calculations for different outcome types, or accounting for cluster effects in your design, isn’t just academic. It directly affects whether your findings hold up. We build this kind of thinking into every custom methodology we develop, because the details are where reliability is won or lost.
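Cluster effects are a good example. When respondents are grouped, say several buyers from the same client company, their answers tend to be correlated, so each extra respondent adds less new information. This Python sketch applies the Kish design effect for equal-sized clusters, 1 + (m - 1) × ICC, where m is the cluster size and ICC is the intraclass correlation. The cluster size, ICC, and starting sample of 126 are hypothetical values chosen for illustration.

```python
from math import ceil


def design_effect(cluster_size: float, icc: float) -> float:
    """Kish design effect for equal-sized clusters: 1 + (m - 1) * ICC."""
    return 1 + (cluster_size - 1) * icc


def clustered_sample_size(n_independent: int, cluster_size: float, icc: float) -> int:
    """Total participants needed when respondents are grouped in clusters."""
    return ceil(n_independent * design_effect(cluster_size, icc))


# Hypothetical: 126 respondents needed if independent, 10 per client firm, ICC = 0.05.
print(design_effect(10, 0.05))
print(clustered_sample_size(126, 10, 0.05))
```

Even a modest ICC of 0.05 with 10 respondents per firm inflates the requirement by 45%, from 126 to 183 participants, which is why spreading respondents across more firms is often cheaper than it looks.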

Get expert support for your research methodology

If you want your next study to be more robust and actionable, support is just a conversation away. Designing a reliable research methodology takes experience, rigor, and the kind of flexible thinking that adjusts to your specific goals without cutting corners on quality.

At Veridata Insights, we offer full-service support for every stage of the research process: consultation and design, methodology, questionnaire review, programming, data collection, data processing and coding, and reporting, analytics, and data visualization. No project minimums. Seven days a week, 365 days a year.

Whether you need a partner for one piece of the puzzle or end-to-end research execution, we’re ready. Speak to a Veridata specialist today and let’s build something worth trusting.

Frequently asked questions

What is the most important first step in designing a research methodology?

Clarifying your objectives and forming a precise research question or hypothesis is the most critical first step. Without this foundation, every methodological choice that follows is built on uncertain ground.

How big should my sample size be for quantitative and qualitative studies?

For quantitative studies, 30 participants is a common rule-of-thumb minimum for basic statistics, though most studies run larger. Qualitative research typically reaches saturation with 5 to 50 participants, depending on the scope and diversity of your audience.

How do I decide between qualitative, quantitative, or mixed methods?

Choose qualitative for exploration and depth, quantitative for testing hypotheses and generalizing findings, and mixed methods when you need both breadth and contextual understanding within a single study.

What ethical factors should I consider?

Informed consent, bias minimization, and participant privacy are the three non-negotiables. You should also follow any relevant institutional or industry guidelines, especially in healthcare or regulated research environments.

Why is pilot testing recommended in research design?

Pilot testing catches design flaws, ambiguous questions, and logistical issues before they infect your full dataset. It’s one of the lowest-cost ways to protect the validity of your entire study.